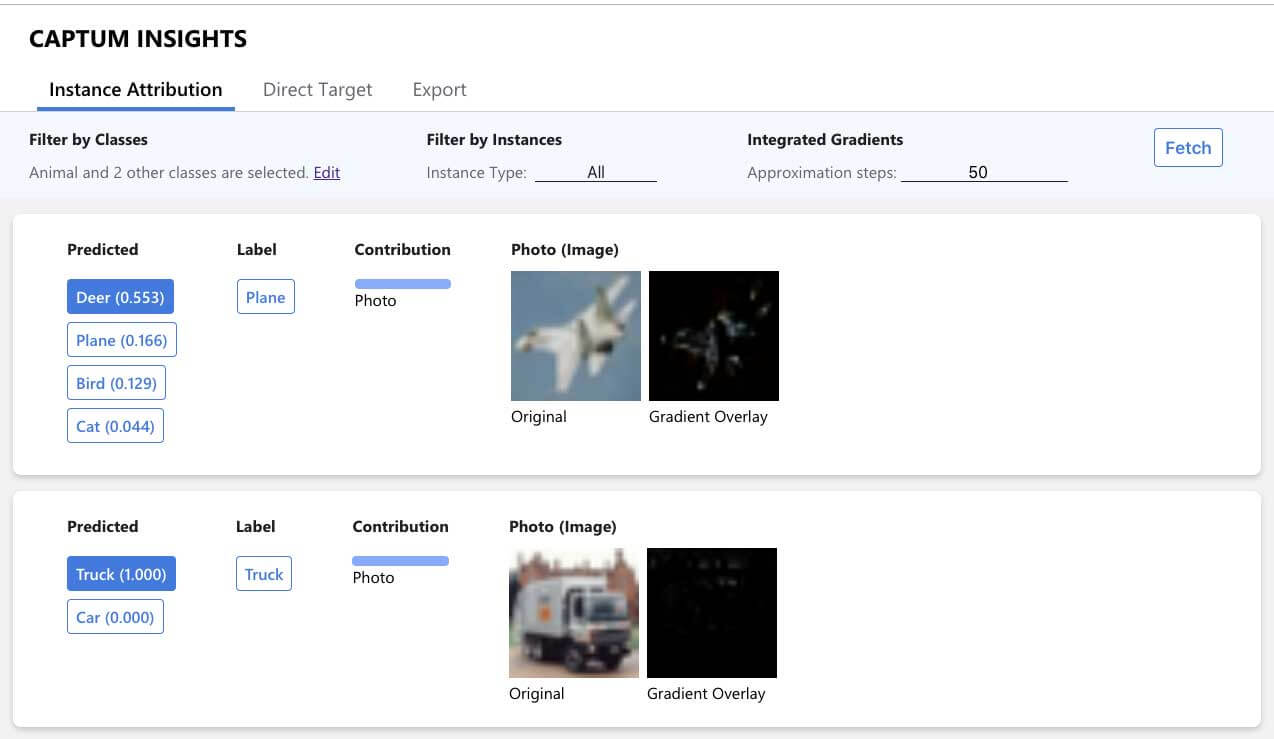

Interpreting PyTorch models with Captum

As models become more complex, it is becoming increasingly important to develop methods for interpreting their decisions. I have already covered model interpretability extensively over the last few months, including posts about:

- Introduction to Machine Learning Model Interpretation
- Hands-on Global Model Interpretation
- Local Model Interpretation: An Introduction

This article will cover Captum, a flexible, easy-to-use model interpretability library for PyTorch that provides state-of-the-art tools for understanding how specific neurons and layers affect predictions. ...