This is the semester project for my Master's in Robotics, Systems and Control at ETH Zurich, started in February 2026. The goal is to build a system that converts spoken language into continuous sign language motions for a humanoid robot.

Sign language interpretation is essential for accessibility, but professional interpreters are in limited supply. A robotic interpreter could offer a scalable alternative by translating speech into sign language in real time.

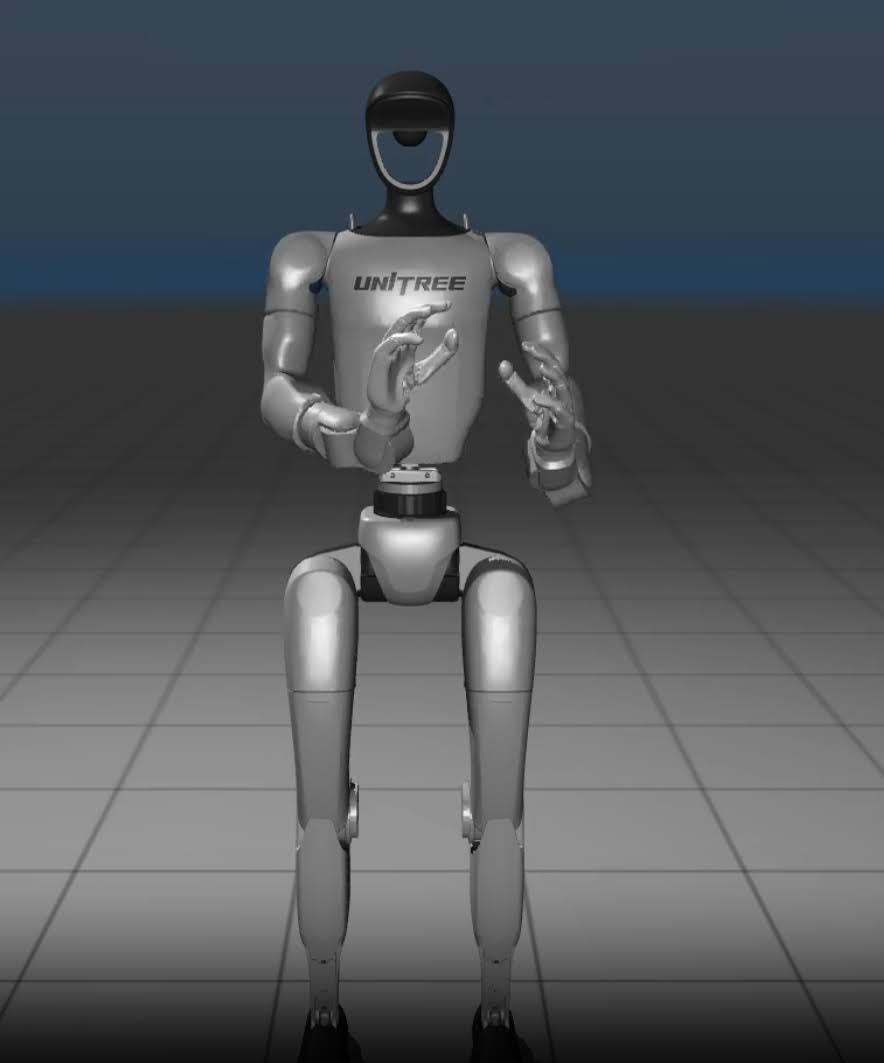

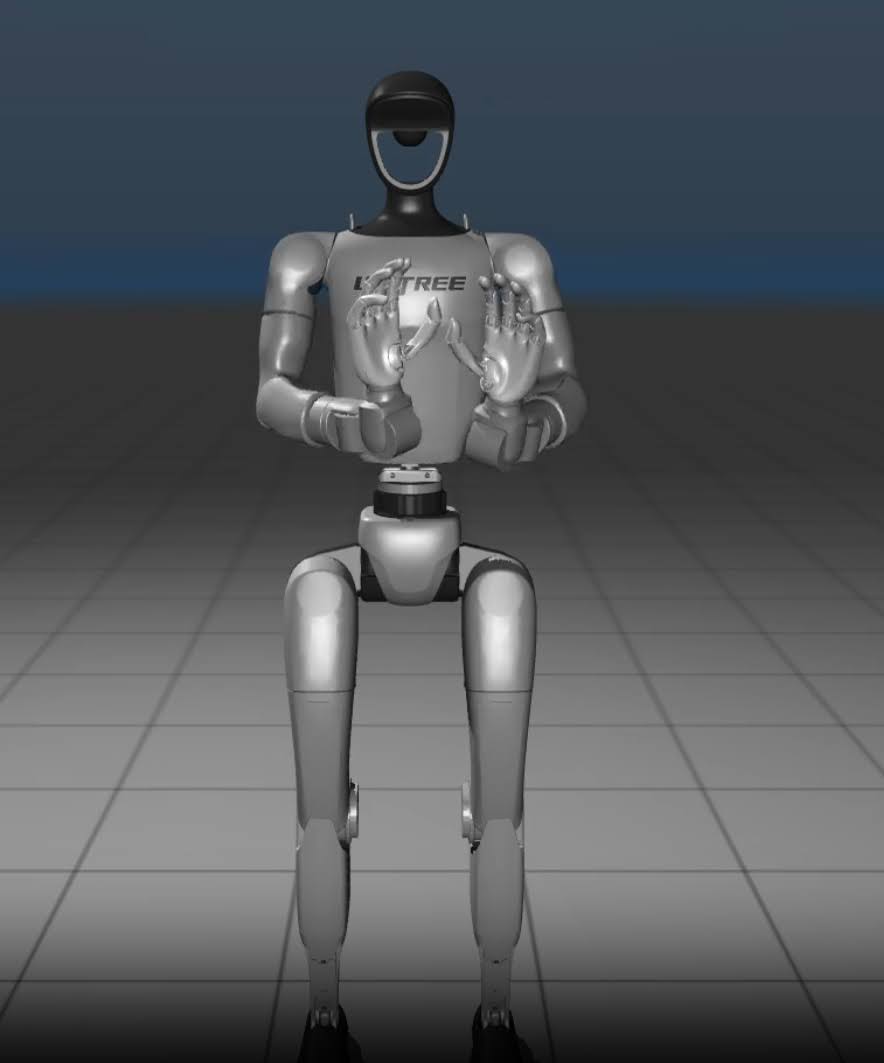

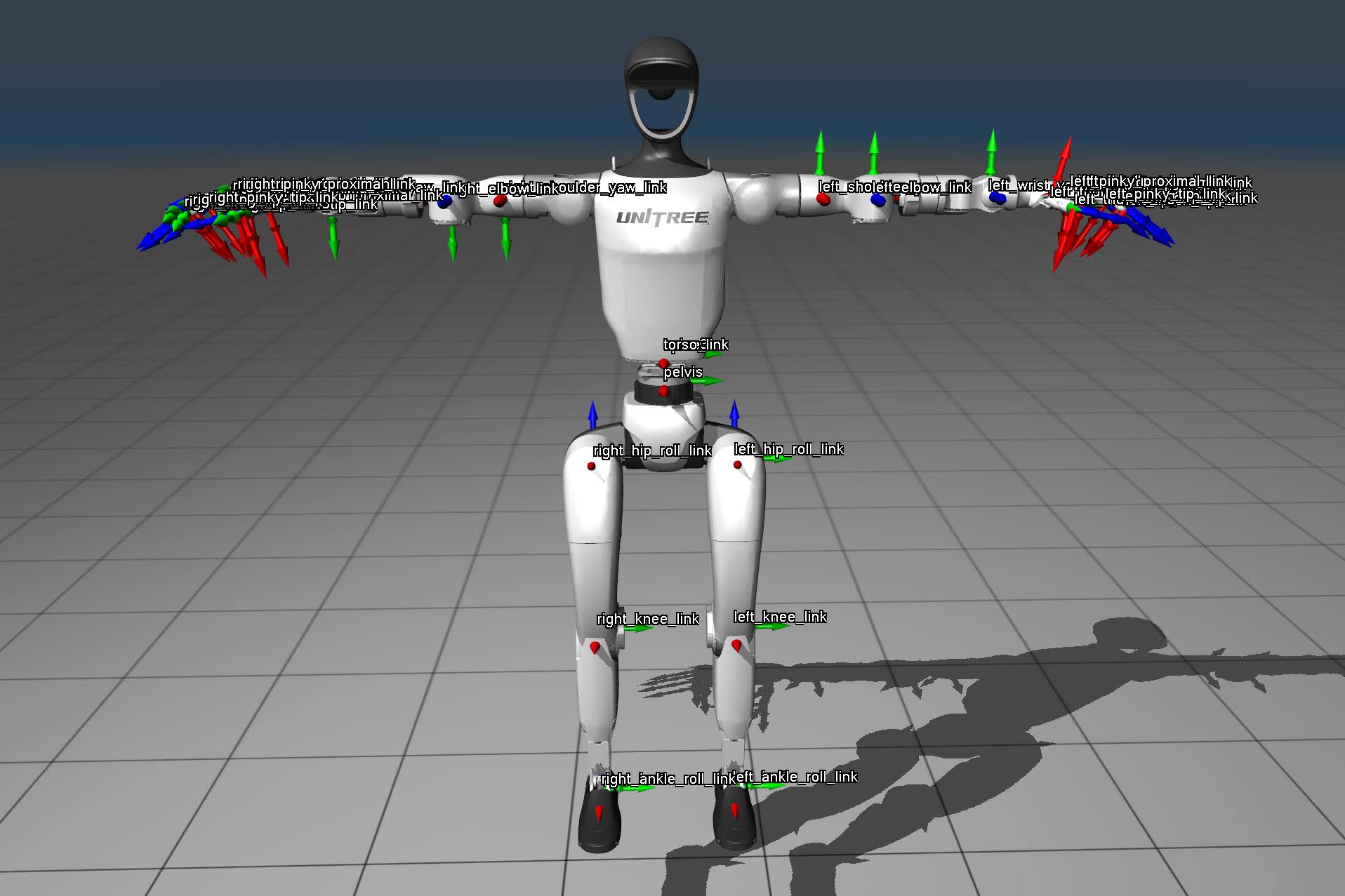

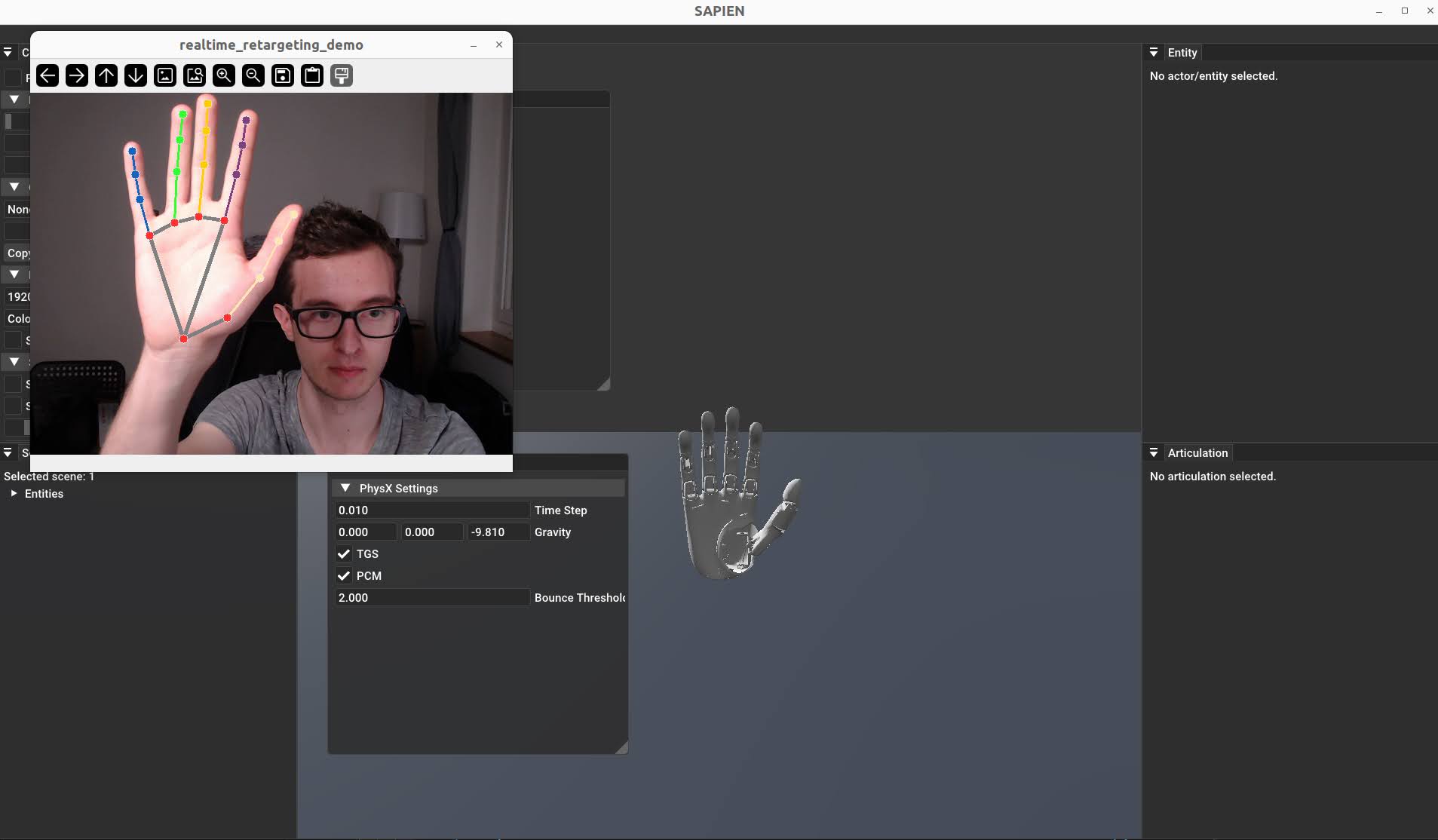

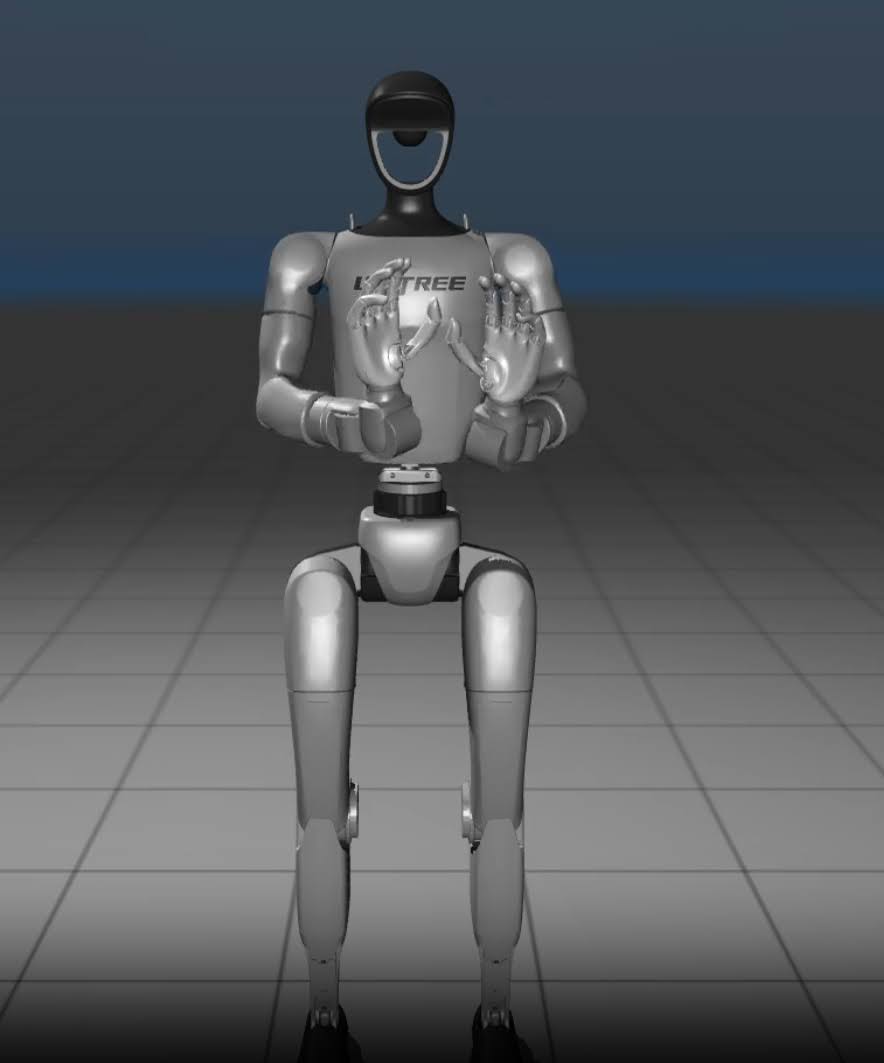

The approach follows a modular pipeline: sign language motions are first extracted from video demonstrations, then retargeted to the robot, and finally reproduced through a control policy trained via imitation learning in simulation. The key challenge is composing individual signs into fluid, continuous sentence-level signing rather than isolated word-level gestures. The target platform is the Unitree G1 humanoid equipped with Brainco Revo2 dexterous hands.
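To make the composition challenge concrete, here is a minimal sketch of the simplest baseline I can think of: a linear cross-fade between consecutive retargeted sign clips. It is only an illustration and not the method used in the project, where a learned control policy is meant to produce the transitions. The names `Frame`, `Clip`, `crossfade` and `compose` are hypothetical, and the sketch assumes every frame is a joint-angle vector of the same length.

```python
from typing import List

Frame = List[float]  # one joint-angle vector in radians (hypothetical layout)
Clip = List[Frame]   # one retargeted sign as a sequence of frames


def crossfade(a: Clip, b: Clip, overlap: int) -> Clip:
    """Join two clips, linearly blending the last `overlap` frames of `a`
    with the first `overlap` frames of `b`."""
    if overlap < 0 or overlap > min(len(a), len(b)):
        raise ValueError("overlap must be between 0 and the shorter clip length")
    if overlap == 0:
        return a + b
    head = a[:len(a) - overlap]
    tail = b[overlap:]
    blended = []
    for i in range(overlap):
        # The weight moves from mostly-a to mostly-b across the overlap window.
        w = (i + 1) / (overlap + 1)
        frame_a = a[len(a) - overlap + i]
        frame_b = b[i]
        blended.append([(1 - w) * x + w * y for x, y in zip(frame_a, frame_b)])
    return head + blended + tail


def compose(clips: List[Clip], overlap: int) -> Clip:
    """Chain several sign clips into one sentence-level trajectory."""
    if not clips:
        return []
    out = clips[0]
    for clip in clips[1:]:
        out = crossfade(out, clip, overlap)
    return out


# Two toy one-joint "signs": hold at 0 rad, then hold at 1 rad.
sign_a = [[0.0], [0.0], [0.0]]
sign_b = [[1.0], [1.0], [1.0]]
sentence = compose([sign_a, sign_b], overlap=1)
print(len(sentence), sentence[2])
```

A cross-fade like this removes the jump between isolated gestures, but it blends in joint space without regard to balance, self-collision or the meaning of the hand shape, which is part of why sentence-level signing is the hard part of the pipeline.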

The project is ongoing. The photos and videos on this page show the current progress.